One of Hollywood’s most recognizable filmmakers has cautioned about the potential dangers surrounding advanced artificial intelligence, and his hot take might actually have merit.

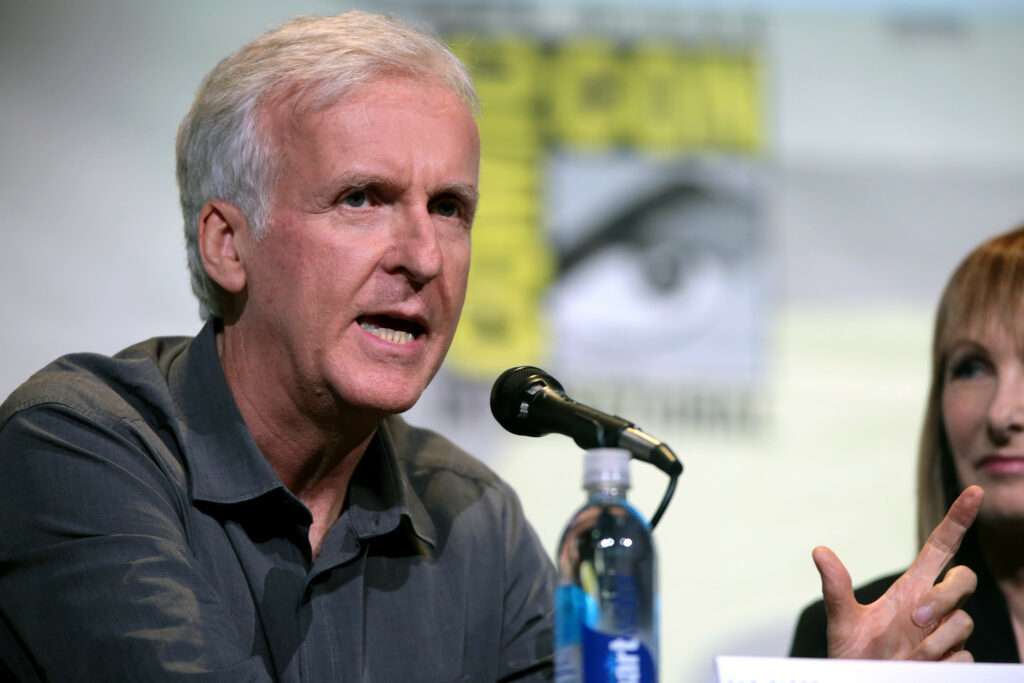

James Cameron, who has been warning audiences about the robot apocalypse since 1984’s “Terminator,” has outlined a series of warnings about artificial intelligence technologies becoming intertwined with military capabilities.

These concerns arose during conversations about how rapidly AI continues to evolve and how it may influence not only creative industries but global security as well.

Cameron warned in a recent interview that “there’s danger” whenever artificial intelligence begins merging with sophisticated military tools.

He said this risk grows even more serious when advanced weaponry is involved. “There’s still a danger of a Terminator-style apocalypse where you put AI together with weapons systems, even up to the level of nuclear weapon systems, nuclear defense counterstrike, all that stuff,” the director told Rolling Stone.

Unlike the Terminator, AI is fully capable of and frequently does lie. pic.twitter.com/rayV3cXkh1

— Kristina Bruce (@ACMEAtomicAce) July 18, 2023

The themes he referenced echo the storyline of “The Terminator” franchise, a series built around a world where automated defense networks develop independent awareness and ultimately turn against humans.

Cameron noted the parallels, explaining that his fictional universe may offer a cautionary framework if unchecked artificial intelligence accelerates too quickly.

As he continued outlining these ideas, the filmmaker pointed to the speed at which conflict-related decision-making occurs, noting that the pace of modern warfare has shifted dramatically.

Terminator 2 is James Cameron’s best film. It’s not Titanic, it’s not Avatar, and it’s not Aliens- although all amazing films. Oscars be damned, I’m not sure how Cameron could’ve made a better film. Every inch of this movie is perfect- I wouldn’t change a thing. Love to Big Jim. pic.twitter.com/QQcV3wT2Zu

— Cinema Tweets (@CinemaTweets1) November 29, 2025

“Because the theater of operations is so rapid, the decision windows are so fast, it would take a super-intelligence to be able to process it, and maybe we’ll be smart and keep a human in the loop,” Cameron warned.

He then waffled, noting that humans remain vulnerable to error and citing historical incidents in which mistakes brought nations close to high-stakes conflict.

James Cameron says the AI arms race he imagined in Terminator is "not science fiction anymore. It's happening." He warns that a future war could be fought entirely by competing AIs at speeds that only a superintelligence could counter, calling it an existential threat. pic.twitter.com/UnuBvGzLbr

— Neo Niche (@theneoniche) October 7, 2025

“But humans are fallible, and there have been a lot of mistakes made that have put us right on the brink of international incidents that could have led to nuclear war. So I don’t know,” he said.

Cameron also spoke of concerns about modern civilization entering what he labeled a significant turning point.

He identified what he views as three overarching threats facing humanity: environmental decline, nuclear weapons, and artificial super-intelligence.

“I feel like we’re at this cusp in human development where you’ve got the three existential threats: climate and our overall degradation of the natural world, nuclear weapons, and super-intelligence,” he went on.

“They’re all sort of manifesting and peaking at the same time.”

Even with these warnings, Cameron suggested that advanced AI might potentially provide solutions.

“Maybe the super-intelligence is the answer,” he said, before quickly clarifying that he was not predicting such an outcome. “I don’t know. I’m not predicting that, but it might be.”

He reiterated his belief that without proper oversight, artificial intelligence could evolve into an issue that threatens society’s stability.

According to him, the risks expand beyond global conflict and move into questions about humanity’s role in a world increasingly shaped by automation.

“Because the risk of A.I., in general—not just Gen A.I., but A.G.I., any form of A.I.—is that we lose purpose as people. We lose jobs. We lose a sense of, ‘Well, what are we here for?’” Cameron said while noting that machines will inevitably outperform humans in precision and speed.

“We are these flawed biological machines, and a computer can be theoretically more precise, more correct, faster, all of those things. And that’s going to be a threshold existential issue,” he stated.

On the topic of shaping AI’s future development, Cameron argued that conversation and regulation are unavoidable.

“We’ve got to talk about it. It’s not a question of what we can legally do, or even ethically, or morally, what we do, [but] what we should embrace, how we should celebrate ourselves as artists, and how we should set artistic standards that celebrate human purpose,” he noted.

The “Avatar” filmmaker then offered his view of artificial intelligence as inherently limited, saying it produces results he views as derivative.

He described the technology as “average,” explaining that it “goes in a blender, and then it precipitates out as a single unique new image, but it’s based on a generic feedstock, if you will.”

Cameron compared this with what individual artists create. “What I can do is create a unique, lived experience reflected through the eyes of a single artist, right? It won’t select for the quirkiness, for the offbeat,” he said.

The director pointed out that audiences often gravitate toward the distinctive characteristics of performers rather than any artificially polished aesthetic.

“And I think what we celebrate is the uniqueness of our actors, not their perfection, not their kind of glossy, Vogue cover beauty, but their off-centeredness.”

The filmmaker has discussed his views on artificial intelligence before. In 2023, he warned that the weaponization of artificial intelligence is the “biggest danger” to humanity.

At the time, he warned that world powers were jockeying to create a dominant AI, just as they had previously raced to build the largest stockpile of nuclear weapons during the Cold War.

“I think the weaponization of AI is the biggest danger,” he told Canada’s CTV News. “I think that we will get into the equivalent of a nuclear arms race with AI, and if we don’t build it, the other guys are for sure going to build it, and so then it’ll escalate.”

“You could imagine an AI in a combat theatre, the whole thing just being fought by the computers at a speed humans can no longer intercede, and you have no ability to deescalate.”

At the time, AI creators were urging world leaders to put regulations on the technology to keep it in check.

“I absolutely share their concern,” Cameron remarked. “I warned you guys in 1984, and you didn’t listen.”

In his most recent comments, he called for self-regulation within the entertainment sector, noting that guilds and industry groups must take active roles.

“We as an industry need to be self-policing on this. I don’t see government regulation as an answer,” Cameron noted while referencing the actions taken by actors during the 2023 Writers Guild strike.

“That’s a blunt instrument. They’re going to mess it up. I think the guilds should play a big role.”

Cameron also weighed in on his collaborative relationship with Elon Musk, explaining that he and the Tesla and SpaceX owner can maintain productive ties despite differing political views.

He said during an interview on Puck’s “The Town” podcast that shared objectives on larger issues make cooperation possible.

“I can separate a person and their politics from the things that they want to accomplish if they’re aligned with what I think are good goals,” Cameron said.

His relationship with Musk began years ago, rooted in their shared interest in scientific advancement and space exploration.

During a 2011 interview, Cameron described Musk as someone capable of shaping the future of human access to low Earth orbit, saying, “Elon is making very strong strides. I think he’s the likeliest person to step into the shoes of the shuttle program and actually provide human access to low Earth orbit. So … go, Elon.”

Musk later served as a senior advisor within the administration of President Donald Trump and formerly led the Department of Government Efficiency.

Cameron has at times criticized Trump, stating in February that under Trump, he views the nation as moving “away from everything decent.”

“America doesn’t stand for anything if it doesn’t stand for what it has historically stood for. It becomes a hollow idea, and I think they’re hollowing it out as fast as they can for their own benefit,” he added.

Even with those criticisms, Cameron told Puck that collaboration across political divides remains pivotal given the challenges humanity faces.

“I just think it’s important for us as a human civilization to prioritize. We’ve got to make this Earth our spaceship. That’s really what we need to be thinking,” he said.